What Worlds Are We Building With Our Charts?

My mother worked for the local historical society while I was growing up, so I spent a lot of time in the 1815 historic house that was the group’s headquarters and main touring location. Docents would wear their period-appropriate hoop skirts and take people through the parlor and the master bedroom and the grand staircase. The slave quarters, tucked away in a hallway on the upper floor, were one of the last parts of the tour. You reach them through a back stairway (originally a trap door with a ladder), so the enslaved people who worked in the house would not be seen using the front stairs. Often the docents would leave the tour, as the stairs were too narrow for hoop skirts (the enslaved women, of course, would not have worn hoop skirts). “Careful on the stairs,” they would say, because the treads were just slightly too close together, with no space for missteps.

There are a lot of assumptions about the world packed into that staircase. And, once built, the staircase acted to reinforce the parameters of that world. Who could be where, what they could wear when they were there, what sort of bodies and relations and duties they had to have; all of these prescriptive relations and identities were integrated into the architecture of the building and then shaped the lives of the people who used the building from then on. These architectural politics are omnipresent, from massive scales (the ways that cities were built around railroad lines versus highways, suburban sprawls versus urban cores, redlining and sundown towns) and down to the tiniest detail (keeping with the theme, I’m told there’s a historic house in South Carolina where the door to the parlor where the men would enjoy post-prandial drinks and cigars was intentionally made narrow to keep women and their hoop skirts out: a sort of antebellum attempt at a “man cave”).

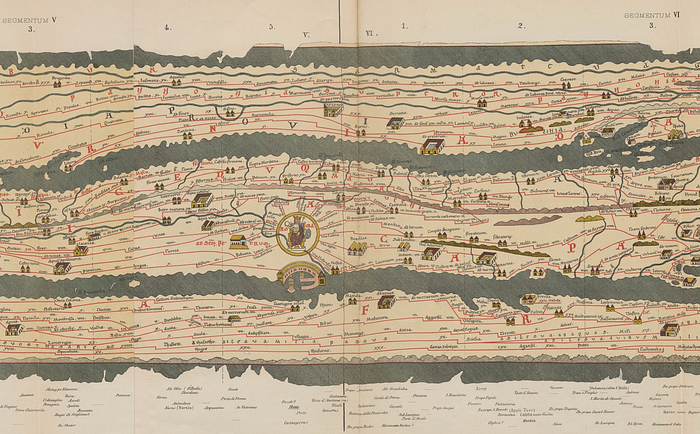

Similar anecdotes fill the pages of Walter Benjamin’s unfinished The Arcades Project, built up of quotations, short commentaries, and other snatches of prose and poetry about the architectural history of Paris. The titular covered arcades connected the blocks of Paris with canopies of glass that allowed one to stroll from place to place while avoiding the busy streets. Cafés and hawkers could fill those liminal spaces, and the parallel structures across large swathes of the city allowed one to walk in an almost hypnagogic state from place to place without mindfulness or objective. Benjamin ties these arcades to the archetype of the “flâneur,” the idle urban wanderer who to some extent could not exist in earlier versions of the city. Similarly, he argues, given the Parisian catacombs, with their vermicular subterranean winding and miles and miles of unmapped corridors, is it any wonder that the history of Paris is full of smugglers, revolutionaries, and secret societies? Across the Atlantic, Robert Caro’s The Power Broker: Robert Moses and the Fall of New York tells stories about how simple decisions like the height of bridges (too short for buses but not for private cars) codify and reinforce racist and classist notions of what a city should be, and how its citizens should act.

Doctrinaire historical materialism would suggest that it is these material conditions, these technological and physical structures, that shape the course of societies at a more fundamental causal level than our stated ideologies or the actions of our heroes. You don’t have to sign up for all of that project, but it seems to me that, at the very least, our ideologies shape how we build things, and the way things are built shapes our ideologies. “Build things,” here, refers not just to our houses and cities, but to our infrastructure, our institutions, and certainly our software. The charts and graphs we produce as analysts, researchers, designers, journalists, or even as “information flâneurs” all have this ideological bent: they reflect the world that created them, and shape the world that comes after them.

I am therefore using this space to pose the following question:

What sort of worlds are we building with information visualization?

With, as per the usual nature of questions like these, the answer being “probably not worlds we actually want to live in.” An extended example arises in Sarah T. Hamid’s work pushing back against carceral technologies:

How we know, the way we know, our epistemic practices, are a political decision. They enroll us in technological and research traditions and transform our relationship to both the object of inquiry and the intention behind it. I remember this one moment when CTRN was archiving different policing program grants. We were working in a spreadsheet. There were blank cells in the spreadsheet, and we became obsessed with filling them in. And then after a week we were like, “Why are we doing this? Why are we so obsessed with having a complete spreadsheet?” We started to realize that our way of knowing and our mode of inquiry were being influenced by the nature of the spreadsheet. It wasn’t curiosity, or any real need to find the information. It was the structure of the technology.

Knowledge takes a particular shape when you start to use particular mediums. So it’s important to continuously reassess how your knowledge is being shaped because, at the end of the day, if you give into what the technology wants, then your work just becomes police work. Your organizing work just turns into a project to surveil the police, you cultivate a need to satisfy each blank cell, you strive for total information. You start to take on the state’s paranoid affect. You can lose yourself in that.

Just as with the staircase and the doorframe, the spreadsheet appears to be another tool that was created with a certain view of the world in mind (you have a grid of data that you need to “fill in” with precise values: Excel cannot abide a vacuum) that creates or reinforces a particular activity (finding more data to complete the panopticon, rather than being satisfied with uncertainty or unknowns) that acts a bit like an intellectual contagion. Hamid was working specifically on the injustices that arise from surveilling too much, and still noticed this impact: imagine how it might impact those of us who are less vigilant about these sorts of epistemic commitments.

Shannon Mattern does a lot of thinking and writing about the politics of how and what we classify and “data-ify.” A particular term of hers that I keep thinking about is that of “aspirational ontologies”: ways of structuring the world based on how we want to shape it rather than on existing structures and categories given to us. One reading that recurs in her syllabi is Borges’ The Analytical Language of John Wilkins, which describes an encyclopedia with absurd subcategories of animals including those that are “fabulous,” “having just broken the water pitcher,” or those “that from a long way off look like flies,” etc. Borges’ encyclopedia shows both the arbitrariness of our classification of the natural world as well as the extent to which those projects are (doomed by?) specific human interests rather than objective structures of the universe. Similarly, Johanna Drucker’s keynote at IEEE VIS points out how the human perspectives we take can “distort the grid” of presumed objective structures like maps and timelines: one of her examples was that the second before you kiss someone for the first time is just a qualitatively longer and different sort of second than other sorts of seconds.

That the act of collecting data, per se, results in these sorts of distortions is well-known. Marilyn Strathern even codified it in her generalization of Goodhart’s Law: “when a measure becomes a target, it ceases to be a good measure.” Our behavior warps and twists to optimize the measure rather than the thing we’re measuring. E.g., if the measure of “how good of a tech support worker is this person?” is “how many support cases do they close?” and this measure is connected to hirings and firings and salary, then you might end up creating a tech support organization that focuses on easy problems that are quick to solve, leaving the people with the harder problems to languish (or passing them around like hot potatoes to other people or departments). To return to the subject of data-driven policing, if you’re trying to optimize police placement, then placing police in a “high crime” area results in more scrutiny, which uncovers more crime than would otherwise be detected, which results in the region appearing to be an even higher “high crime” area, which results in more police being assigned, etc. etc., a vicious spiral or “ratchet effect” that widens the gulf between over- and under-policed neighborhoods. The way we view the world shapes the data we collect, which shapes the world we create. It’s why Drucker uses terminology like “capta” (denoting something created or taken or constructed) in opposition to the notion of “data” (as something given or observed or otherwise objectively true about the world).
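The ratchet effect described above can be sketched as a toy simulation. Everything here is an assumption made for illustration: two hypothetical neighborhoods with the same underlying crime rate, recorded crime that scales with patrol share, and a reallocation rule that moves a fixed slice of patrols toward whichever area recorded more. It is not a model of any real department’s policy.

```python
def simulate_patrol_feedback(rounds=10, true_rate=100.0,
                             initial_share_a=0.55, shift=0.05):
    """Toy feedback loop between two hypothetical neighborhoods, A and B.

    Assumptions (all illustrative):
    - A and B have the SAME underlying crime rate (`true_rate`).
    - Recorded crime in an area is proportional to its share of patrols.
    - After each round, `shift` of the patrol share moves toward whichever
      area recorded more crime.

    Returns a list of (share_a, recorded_a, recorded_b), one per round.
    """
    share_a = initial_share_a
    history = []
    for _ in range(rounds):
        # More patrols means more of the (identical) crime gets recorded.
        recorded_a = true_rate * share_a
        recorded_b = true_rate * (1.0 - share_a)
        history.append((share_a, recorded_a, recorded_b))

        # Reallocate patrols based on what was recorded, not on true rates.
        if recorded_a > recorded_b:
            share_a = min(1.0, share_a + shift)
        elif recorded_b > recorded_a:
            share_a = max(0.0, share_a - shift)
    return history


if __name__ == "__main__":
    for share_a, rec_a, rec_b in simulate_patrol_feedback():
        print(f"patrol share A={share_a:.2f}  recorded A={rec_a:.1f}  B={rec_b:.1f}")
```

In this sketch a small initial difference in patrol placement grows round after round, even though nothing distinguishes the two neighborhoods except where the measuring was done. An exactly even starting split stays even.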

I do not mention all of this to motivate some naïve postmodern strawman about how nothing is true and everything is meaningless; rather, I just want you to consider how different perspectives situate and contextualize data. For instance, in my grandmother’s kitchen in her home up at the top of a spur of the Blue Ridge Mountains, we would have to account for how the elevation impacted things like proofing or baking times. The apparently solid numbers and “algorithms” on, say, the back of a box of cake mix looked less solid when we put them into practice. Similarly, the KPIs in your dashboard or the values in the infographic in your newspaper are likely generated by specific people with specific contexts that will not have universal accuracy or applicability. Nobody (or, okay, probably very few people) wakes up in the morning and says (optionally while twirling a mustache), “I am going to make biased data visualizations today.” Still, we are limited by our perspectives, shaped by particular agendas, or working towards particular goals, all of which can influence what kind of data we collect, value, or share. These influences can be subtle or impossible to quantify but they are never absent. The project of “de-biasing” data to make it “fully objective” is just sort of intrinsically doomed, but the project of making our perspectives visible and open to interrogation, or finding common ground with different groups, might not be.

Even once we have collected our data, I still think there are a lot of things in the way we design or present or think of data visualizations that perform these sorts of distortions. Although, again, it’s not like there is some mythical “undistorted” visualization that solves all these issues: we’re choosing amongst ideological perspectives, not ridding ourselves of them.

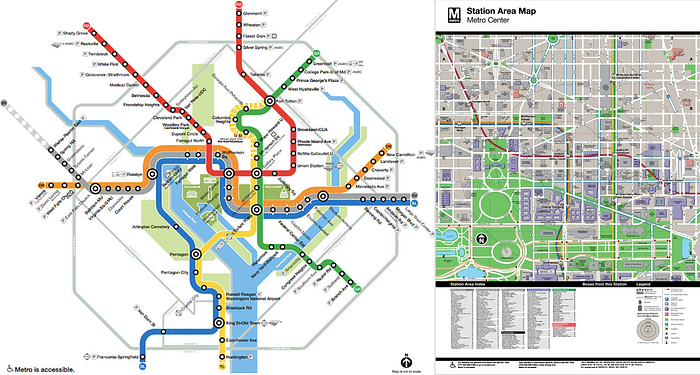

For instance, in the DC metro (and in pretty much every other transit system around the world, but this is the one I grew up with, so let me have this) there are two kinds of maps, often juxtaposed next to each other in plinths by the entrances and exits of stations. The topological “system map” is for the person who is going underground, who must remember the count of stations or where lines intersect to get to their intended destination. The subterranean world they navigate doesn’t care much about the exact geographic location of or distance between stations (maybe they want a few landmarks like the Potomac River or the National Mall, but that’s it), but rather what is connected to what and where. The “exact” geographical information about stations is simplified and abstracted away. The “neighborhood map,” by contrast, shows the immediate blocks around a particular station in great detail and geographic fidelity. These are for the people emerging from the underground to revisit the surface world, where we suddenly care about how many blocks it might be to our destination when we ignored miles and borders before. And note that the range of this detail is limited to a dozen or so blocks on each side: the intended viewer is meant to be thinking more about walking or biking distances rather than cars or buses. The way you are “meant” to interact with the metro system and the city it serves is encoded in the design of information about the system.

Here’s a more trivial example, just as a working exercise:

What sort of world is this chart conveying, explicitly or implicitly? Some examples that come to mind, but are by no means the only things going on:

- The title (“Sunny Outlook for WidgetCo”) combined with the positive slope of the profits indicates that the viewer should interpret this chart as good news for WidgetCo. Maybe we didn’t notice that, while profits are increasing year to year, this rate of increase is slowing. Hidy Kong and some other researchers have done studies about using chart titles to play these kinds of games with framing, and it does seem to have an impact on what messages we retain from the data.

- We don’t have any other companies or metrics for context. Maybe profit is good but other measures of company health or competitiveness are down. Maybe WidgetCo is a big polluter or contributor to all sorts of social ills and “profit” fails to capture the negative social and monetary impact their growth has on their community. The title and measure don’t leave a lot of room for those sorts of analyses.

- The plotted values plus the range of the y-axis ($0–3 million) give us an implicit scale for what values we should expect. For instance, if I told you that the profits in 2004 were $100 million or $1 billion, you might want to know what on earth happened to the widget market, or maybe question the validity of the data, even though you knew nothing about WidgetCo before I showed you this graph. You’d presumably have fewer questions if the 2004 profits were, say, $2.5 million, just because of the expectation-setting I’ve done with the axis here: our choice of y-axis influences the sort of effects we care about. Negative values don’t even fit in the graph: the assumption embedded in the axis is that profits are always positive. I also wonder if the scales communicate expectations about growth and profit-seeking being an unbounded and endless journey: if this were a series of pie or donut charts (of market share, say), the visual metaphor of having a percentage of a whole would communicate different ideas about growth and success, or perhaps suggest an upper limit for widget sales.

- The use of bars with nice flat tops, in a clear background without much in the way of extraneous visual elements, communicates both certainty and precision. If I had used some other chart design that allows the viewer to experience uncertainty (say, what Jessica Hullman calls “the disco funk of uncertainty visualization” by having values move around in accordance with their estimated variability) then I might have a very different idea about how reliable the increases in profits are over time. Bar charts aren’t really for ranges or guessing, and so the world they depict appears a touch more objective or black-and-white than it likely is once you investigate.

- Similarly, I made this graphic using a big honking piece of software (Tableau, if you were curious). If I had drawn this on the back of a cocktail napkin in sharpie, or on butcher paper with a box of crayons, you might have different expectations about what I was trying to communicate or how seriously I expected you to take individual values. A nice and precise mechanically- or computationally-generated axis encourages the user to make precise divisions or estimates of bars: a hastily hand-drawn or impressionist chart would not encourage similar scrutiny or calculation. The visual properties of this chart could lend it a perceived sense of ownership or authority as well. It’s why organizations are devoting lots of brain power and ink towards nailing down their “visualization style”: branding works!

I could go on, but I think you get the point: this humble little bar chart still manages to do quite a bit of implicit prescriptive and descriptive communicative work, work that may have very little to do with the actual data behind the chart. Some of this work I (as a designer and data architect) did intentionally, some of it was done by the designers of the tool I was using (say, through defaults or recommendations), some of it was done by societal or cultural projects (like a trust in expertise or “the data”) over which I have very little control. Most of this work you might not have noticed if I hadn’t asked you to think about it or otherwise pointed it out; some of this work you don’t care about even now that I’ve pointed it out. But it was work all the same, and I don’t think it would hurt us too much to have a bit more intentionality about what work we’re doing and why, especially when the stakes are higher.
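The first bullet’s point, that profits can rise every year while the rate of increase slows, is easy to check with a few lines of arithmetic. The sketch below uses made-up profit figures (they are not read off the WidgetCo chart), and the function names are my own.

```python
def year_over_year_changes(values):
    """Return the difference between each value and the one before it."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


def growth_is_slowing(values):
    """True if every year is higher than the last, but by a shrinking amount."""
    changes = year_over_year_changes(values)
    still_growing = all(change > 0 for change in changes)
    decelerating = all(b < a for a, b in zip(changes, changes[1:]))
    return still_growing and decelerating


if __name__ == "__main__":
    # Hypothetical annual profits in millions of dollars (illustrative only).
    profits = [1.0, 1.8, 2.3, 2.6, 2.75]
    print(year_over_year_changes(profits))
    print(growth_is_slowing(profits))
```

A “sunny” title and an upward slope are both compatible with a series like this one; whether the chart foregrounds the level or the change is one more design choice.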

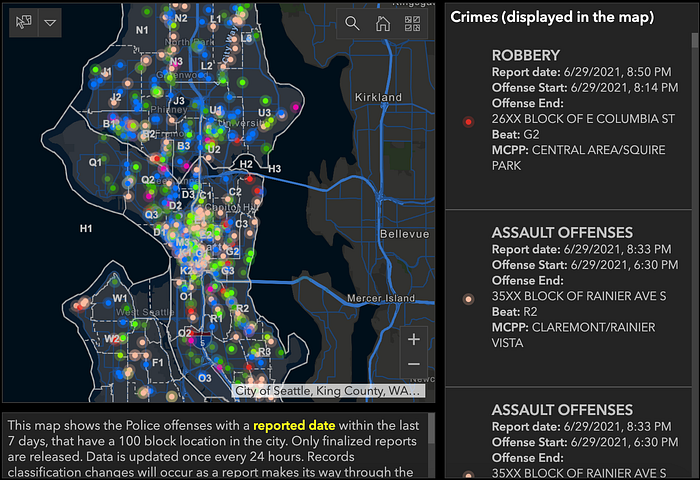

An exercise that I’ve tried out with students (and in a handful of papers, so apologies if you’ve heard me talk about this ad nauseam) is to look at the “crime maps” that city governments often provide, and what sort of stories they tell. Here’s one from Seattle, for instance:

The world of this chart is one of safe or unsafe neighborhoods, with almost no area entirely “safe” (in terms of being free from reported crimes). And it’s hard to disentangle the count or presence of incidents from the rates. I can see there are a lot of crimes in downtown Seattle, but I don’t know if that makes it more or less dangerous to live there; there are also way more people in those areas. And of course, even though there are different colored categories for different kinds of crime, in the summary map it appears as one big technicolor monolithic blob, regardless of the damage or suffering each dot entails. And, as with the ratchet effect I mentioned above, it’s hard to disentangle the raw reports I see here from the unequal ways that Seattle is built and policed (poverty, race, and police presence are just as invisible here as population density). And the default window is just seven days of reports! It’s very hard to get a historical view of whether “the situation” is better or worse than it was a year or a month ago. In short, I think the world of this map is one of crisis, where “crime” as a monolithic entity is omnipresent and inescapable in the city. There are other charts (of trends, of relative rates, of risks) that could have created a world that looked very different. An “aspirational ontology” about crime and policing would, I think, generate a different-looking visualization even based on the same data.
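The gap between counts and rates can be made concrete with a small sketch. The neighborhoods, incident counts, and populations below are invented for illustration and have nothing to do with Seattle’s actual data. Choosing “per 1,000 residents” as the denominator is itself an assumption; daytime population, for instance, would tell yet another story.

```python
def incidents_per_1000(incident_count, population):
    """Convert a raw incident count into a rate per 1,000 residents."""
    if population <= 0:
        raise ValueError("population must be positive")
    return 1000.0 * incident_count / population


if __name__ == "__main__":
    # Hypothetical areas: (reported incidents, resident population).
    areas = {
        "dense downtown (hypothetical)": (120, 40_000),
        "quiet residential (hypothetical)": (30, 5_000),
    }
    for name, (count, population) in areas.items():
        rate = incidents_per_1000(count, population)
        print(f"{name}: {count} incidents, {rate:.1f} per 1,000 residents")
```

On a dot map, the first area would look four times “worse” than the second; as a per-resident rate, the ordering flips. Neither view is the neutral one, but they build noticeably different worlds from the same reports.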

This is all to say that you should think about the charts you make, and the worlds that they entail. Who lives in them, and who doesn’t? What worlds are they working towards? All sorts of design choices we make (or fail to make), consciously or unconsciously, shape the world of the chart. Who is included (or excluded) in the data? What do we measure or not measure (or, to put things more pithily, “what gets counted, counts”)? What expectations about the shape and the extent of the data (now, or in the future) are hardcoded into our designs? What kinds of patterns, and so what sorts of conclusions, are visible or invisible in our charts? How do we make better worlds?